A verified, in-depth guide to the eight frameworks reshaping how AI is built and deployed, from stateful agent orchestration and TPU-native training to Google’s full enterprise stack overhaul at Cloud Next 2026.

Formerly, AI development meant training a model on a dataset and hoping for the best. In 2026, the architecture looks radically different: developers orchestrate fleets of autonomous agents that can plan, reason, delegate, and act across many domains. The framework you choose shapes the language you code in, how your models reason, and how you run operations, and choosing the wrong one could force a rebuild sooner than planned.

This guide cuts through the noise. We researched eight frameworks worth your attention, verified their benchmarks, and stress-tested their real-world claims. Let’s dive in.

1. LangGraph (Stateful AI Orchestrator)

LangChain’s LangGraph is best described as a deterministic execution engine for AI reasoning workflows. It is not a chatbot library or prompt glue, but a control system for LLMs. It models agent workflows as directed cyclic graphs: nodes are reasoning or action steps, edges define control flow, and a shared state object (a TypedDict) serves as the agent’s persistent memory across every step. Because state is explicit and immutable at each transition, LangGraph can checkpoint, replay, and audit every decision the agent makes. The distinction that matters at production scale: LLMs generate text, and LangGraph governs behavior.
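To make the graph model concrete, here is a minimal sketch in plain Python. It is not LangGraph's API: the `AgentState` schema, the `write` and `review` nodes, and the `run` helper are all hypothetical names chosen for illustration. It shows nodes that return a new state, a conditional edge that loops until approval, and a checkpoint log with one entry per transition.

```python
import copy
from typing import Callable, Dict, List, TypedDict


class AgentState(TypedDict):
    """Shared state passed from node to node (hypothetical schema)."""
    draft: str
    revisions: int
    approved: bool


def write(state: AgentState) -> AgentState:
    # Action step: extend the draft and count the revision.
    return {**state, "draft": state["draft"] + "x", "revisions": state["revisions"] + 1}


def review(state: AgentState) -> AgentState:
    # Reasoning step: approve once the draft is long enough.
    return {**state, "approved": len(state["draft"]) >= 3}


def route_after_review(state: AgentState) -> str:
    # Conditional edge: loop back to "write" until the draft is approved.
    return "END" if state["approved"] else "write"


NODES: Dict[str, Callable[[AgentState], AgentState]] = {"write": write, "review": review}
EDGES: Dict[str, Callable[[AgentState], str]] = {
    "write": lambda s: "review",
    "review": route_after_review,
}


def run(state: AgentState, start: str = "write", max_steps: int = 20) -> List[dict]:
    """Run the graph and return a checkpoint log, one entry per transition."""
    checkpoints = []
    node = start
    for step in range(max_steps):
        if node == "END":
            break
        state = NODES[node](state)  # nodes return a new dict; the input is untouched
        checkpoints.append({"step": step, "node": node, "state": copy.deepcopy(state)})
        node = EDGES[node](state)
    return checkpoints
```

Because every transition is recorded with a copy of the state, replaying or auditing a run amounts to reading the log back. A real checkpointer would persist these entries to a database rather than a list.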

In production benchmark comparisons conducted in Q1 2026, LangGraph completed 62% of complex multi-step tasks — the highest rate among tested frameworks. It uses roughly 15% fewer tokens per query than plain LangChain due to explicit state management that avoids redundant context passing. Notably, LangGraph surpassed CrewAI in GitHub stars during early 2026, driven by enterprise adoption. It is backed by Benchmark and Sequoia Capital, and is used in production by companies including LinkedIn, Klarna, Replit, and Elastic.

Key Features: Directed cyclic graphs, stateful memory, human-in-the-loop, LangSmith tracing, PostgresSaver checkpointing, Python / TypeScript

Best for: Production systems requiring audit trails, rollback, human approval gates, and complex conditional routing. If your agent needs to survive server restarts or run for days without losing state, LangGraph is the most robust choice on the market.

Technical Aspects:

Core architecture: Builds state machines with conditional edges, loops, and checkpoint persistence (SQLite, Postgres). Supports sub-graphs with isolated state schemas.

Observability: LangSmith traces every node transition, state mutation, and cost per node. You can attach custom metadata such as a critic score and the token budget used.

LLM integrations: Seamless integration with 100+ LLMs, vector stores, and data loaders via the LangChain ecosystem.

Known limitations: Steep learning curve; a simple ReAct agent takes ~120 lines vs ~40 in Smolagents. Two CVEs found in 2025–2026 (CVSS 9.3 deserialization, 7.3 SQLite injection).

2. CrewAI (Multi-Agent AI Teams)

CrewAI makes multi-agent systems feel like assembling a human team. You define agents with roles, goals, and backstories, then CrewAI infers coordination patterns between them. This abstraction makes it dramatically faster to prototype: 2026 benchmarks show CrewAI achieves 40% faster development time than LangGraph and lower latency (45s vs 68s) on identical 5-step research workflows. The tradeoff is token overhead: CrewAI consumes roughly 18% more tokens per run because of its role and backstory prompt definitions. CrewAI is a Python-based framework for automating multi-agent AI workflows, and it is designed to be LLM-agnostic, so it works with providers such as OpenAI, Anthropic, and Mistral.
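The token overhead is easier to see with a small sketch. The code below is not CrewAI's API. The `Agent` dataclass, `build_prompt`, and `overhead_ratio` are hypothetical helpers, and whitespace-split word counts are only a rough stand-in for a real tokenizer. It shows how a role, goal, and backstory are prepended to every task, and what share of each prompt that preamble takes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        # Role, goal, and backstory travel with every task this agent runs.
        return f"You are a {self.role}. Your goal: {self.goal}. Background: {self.backstory}"


def build_prompt(agent: Agent, task: str) -> str:
    # Full prompt = role preamble + the actual task.
    return f"{agent.system_prompt()}\n\nTask: {task}"


def overhead_ratio(agent: Agent, task: str) -> float:
    """Fraction of the prompt (by whitespace-split words) spent on the role preamble."""
    total = len(build_prompt(agent, task).split())
    return len(agent.system_prompt().split()) / total
```

Short tasks paired with long backstories push the ratio toward 1, which is one plausible reading of where the extra tokens per run come from.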

CrewAI v1.10.1 introduced native MCP (Model Context Protocol) support, collapsing the boundary between agent frameworks and external tool ecosystems. The framework also integrates natively with A2A (Agent-to-Agent) protocol, allowing CrewAI agents to hand off tasks to agents running on Google, Salesforce, or ServiceNow platforms. Real-world deployments include marketing automation (competitor tracking + content drafting + scheduling as a coordinated crew), HR workflows, and supply chain monitoring.

Key features: Role-based agents, native MCP (v1.10.1), A2A protocol, crew orchestration, Python

Best for: Marketing teams, research departments, and SMBs without dedicated AI engineering teams. The recommended starting point in 2026: validate your use case with CrewAI, then migrate to LangGraph if you need production-grade state management and failure resilience.

Technical Aspects:

Strength: Fastest path from idea to working multi-agent prototype. Natural language role definitions lower the floor significantly.

Weakness: Failure handling is weak: when one agent throws an exception, the entire crew stops or retries unpredictably. No equivalent of LangGraph’s conditional edge routing for failures.

Memory: Per-crew memory management — unsuitable for agents that need to run for more than a day without a restart.

Ecosystem: Native MCP support (since CrewAI v1.10.1) connects to 422+ apps. A2A support enables cross-vendor agent handoffs without custom pipelines.

3. Microsoft AutoGen (Enterprise / Research AI Assistant)

AutoGen is Microsoft’s framework for building conversational multi-agent systems where agents communicate with each other through structured dialogue. Unlike LangGraph’s graph model or CrewAI’s crew metaphor, AutoGen’s core abstraction is conversational: agents send messages to each other, negotiate plans, and coordinate actions through a shared message bus. This makes it uniquely suited for research-style workflows where emergent agent behaviors and experimental coordination patterns matter more than execution determinism.

AutoGen maintains a large install base sustained by Microsoft’s early push into multi-agent research and deep Azure ecosystem integration. Native A2A support was added in 2026, enabling AutoGen agents to participate in cross-vendor agent networks. The framework’s conversational model excels at building customer support systems where multiple specialized agents handle different query types in parallel, each routing to its domain of expertise.

Key Features: Conversational agents, A2A protocol, Azure ecosystem, multi-agent dialogue, Python

Best for: Research projects, experimental agent behaviors, academic work, and organizations already running Azure infrastructure. For straightforward production deployments, it requires more custom engineering than LangGraph or CrewAI.

Technical Aspects:

Unique capability: Agents can critique each other’s outputs in structured dialogue, making it ideal for peer-review-style workflows (e.g. code review with a critic agent).

Production path: Moving from research to production often requires significant custom infrastructure. Not as turnkey as LangGraph Platform’s managed deployment.
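The critic pattern described above can be sketched as two functions exchanging messages. This is a toy in plain Python, not AutoGen's API. `Message`, `writer`, `critic`, and `converse` are hypothetical names, and both agents are hard-coded stand-ins for LLM calls.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Message:
    sender: str
    content: str


# An agent here is just a function from the conversation so far to a reply.
AgentFn = Callable[[List[Message]], str]


def writer(history: List[Message]) -> str:
    # Stand-in for an LLM call: add a docstring only if the critic asked for one.
    asked = any("docstring" in m.content for m in history if m.sender == "critic")
    body = "def add(a, b):\n    return a + b"
    return body.replace(":\n", ':\n    """Add two numbers."""\n', 1) if asked else body


def critic(history: List[Message]) -> str:
    # Stand-in for a reviewing LLM: approve only documented code.
    latest = history[-1].content
    return "APPROVE" if '"""' in latest else "Please add a docstring."


def converse(a: AgentFn, b: AgentFn, max_rounds: int = 5) -> List[Message]:
    """Alternate writer and critic turns until the critic approves."""
    history: List[Message] = []
    for _ in range(max_rounds):
        history.append(Message("writer", a(history)))
        history.append(Message("critic", b(history)))
        if history[-1].content == "APPROVE":
            break
    return history
```

The `max_rounds` cap matters in practice: conversational loops have no built-in termination guarantee, so some bound on turns is a sensible default.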

4. LlamaIndex (RAG & Retrieval Layer)

LlamaIndex occupies a distinct layer in the AI stack: it’s not an orchestration framework — it’s the data retrieval foundation that orchestration frameworks sit on top of. It specializes in connecting LLMs to private, structured knowledge bases through vector indexing, document parsing, retrieval-augmented generation (RAG), and hybrid search. By 2026, LlamaIndex Agents has been extended with native A2A protocol support, allowing retrieval agents to participate in cross-platform agent networks and hand off enriched context to orchestration layers like LangGraph or CrewAI.

The architectural pattern that has emerged as the production standard in 2026 is: LlamaIndex handles the data retrieval layer → LangGraph or CrewAI handles the agent orchestration above it. LlamaIndex itself recommends this split. Its performance shines in applications like legal document analysis, enterprise knowledge search, research assistants, and multi-hop reasoning across large corpora that can’t fit in a context window.
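The split between a retrieval layer and an orchestration layer can be sketched without any library. The code below is not LlamaIndex's API. `embed`, `retrieve`, and `build_context` are hypothetical helpers, and the bag-of-words "embedding" stands in for the dense vectors a real system would use.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    # Toy "embedding": lowercase bag of words. Real systems use dense vectors.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse word-count vectors.
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Retrieval layer: return the top-k documents by similarity to the query."""
    q = embed(query)
    scored = sorted(((cosine(q, embed(d)), d) for d in docs), reverse=True)
    return scored[:k]


def build_context(query: str, docs: List[str], k: int = 2) -> str:
    """Hand-off to the orchestration layer: a prompt grounded in retrieved text."""
    hits = [d for _, d in retrieve(query, docs, k)]
    return "Context:\n" + "\n".join(f"- {h}" for h in hits) + f"\n\nQuestion: {query}"
```

The orchestration framework only ever sees the string returned by `build_context`, which is why the two layers can be swapped independently.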

Key Features: RAG pipelines, vector search, document indexing, multi-hop reasoning, A2A support

Best for: Any application where answer quality is bottlenecked by retrieval accuracy rather than reasoning. Combine with LangGraph or CrewAI for the orchestration layer above it.

Technical Aspects:

Why RAG still matters: Even with 1M+ token context windows in 2026, LlamaIndex is essential when knowledge bases contain millions of live documents or require real-time database queries.

Retrieval quality: Supports BEIR benchmark evaluation suite (nDCG@10), reranking, and chunk-score monitoring for continuous retrieval quality measurement.
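For readers unfamiliar with the nDCG@10 metric mentioned above, here is a minimal sketch of the calculation. It assumes the linear-gain variant (some evaluations use 2^rel - 1 as the gain instead) and computes the ideal ordering from the supplied relevance list only. The function names are illustrative.

```python
import math
from typing import Sequence


def dcg_at_k(relevances: Sequence[float], k: int) -> float:
    # Discounted cumulative gain: each relevance is divided by log2(rank + 1).
    return sum(rel / math.log2(rank + 1) for rank, rel in enumerate(relevances[:k], start=1))


def ndcg_at_k(relevances: Sequence[float], k: int = 10) -> float:
    """nDCG@k: DCG of the ranking divided by the DCG of the ideal ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0
```

The input is the list of graded relevance labels in the order the retriever returned them. A perfectly ordered list scores 1.0, and pushing a relevant document down the ranking lowers the score.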

5. Hugging Face Transformers (Open Models Hub)

Hugging Face provides the largest publicly available model hub, over 100,000 models covering every AI task from NLP to computer vision to audio. In 2026, Transformers Agents extends this into agentic workflows and Hugging Face’s Smolagents (a newer entrant from the same organization) has shown the steepest relative growth rate of any agent framework this year. Smolagents’ differentiation is its code-first action mechanism: instead of calling predefined JSON tool functions, it writes and executes Python code as its primary action, making it dramatically more flexible for novel tool use.
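The difference between JSON tool calls and code-first actions can be illustrated with a toy dispatcher. This is not Smolagents' API. `TOOLS`, `run_json_action`, and `run_code_action` are hypothetical, and the action strings stand in for model output.

```python
import json
from typing import Callable, Dict

# Hypothetical tool registry shared by both styles.
TOOLS: Dict[str, Callable[..., float]] = {
    "add": lambda a, b: a + b,
    "mul": lambda a, b: a * b,
}


def run_json_action(action: str) -> float:
    """JSON style: one predefined tool call per model turn."""
    call = json.loads(action)
    return TOOLS[call["tool"]](**call["args"])


def run_code_action(code: str) -> float:
    """Code style: the model emits Python that may chain several tools at once.

    WARNING: exec on model output is unsafe without a real sandbox. The empty
    __builtins__ here is only a gesture at isolation, not a security boundary.
    """
    namespace = {"__builtins__": {}, **TOOLS}
    exec(code, namespace)
    return namespace["result"]
```

With the JSON style, computing (2 + 3) * 4 takes two model turns. With the code style, a single action such as `result = mul(add(2, 3), 4)` does it in one, which is the flexibility the paragraph above describes. The price is that executing generated code needs proper sandboxing.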

Hugging Face’s critical differentiator remains its on-premises capability. For healthcare, finance, legal, and government applications where data cannot leave organizational infrastructure, cloud API providers like OpenAI or Anthropic are simply not an option. Hugging Face eliminates that constraint entirely. The JAX-Privacy 1.0 library, built on top of JAX and tightly integrated with Google’s open Gemma model family, enables fine-tuning large models with differential privacy guarantees, which are essential for regulated industries handling sensitive personal data.

Key features: 100k+ models, Smolagents, demo apps, open datasets, differential privacy, code-first agents, no vendor lock-in

Best for: Privacy-sensitive industries, teams avoiding vendor lock-in, and researchers who need maximum model variety. Smolagents is the fastest path to a working open-source agent in 2026.

Technical Aspects:

Smolagents advantage: A simple ReAct agent takes ~40 lines vs LangGraph’s ~120. Generating executable Python code instead of JSON tool calls enables broader tool coverage with less boilerplate.

Privacy tooling: JAX-Privacy 1.0 enables DP training with vmap and shard_map for distributed environments. First-class Gemma fine-tuning support.
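The core step behind differentially private training can be sketched in a few lines. This is a plain-Python illustration of the usual DP-SGD recipe (clip each example's gradient, sum, add Gaussian noise, average), not JAX-Privacy's API. The function names and parameters are assumptions, and privacy accounting is omitted entirely. In JAX, the per-example gradients would typically come from vmap rather than a Python list.

```python
import math
import random
from typing import List, Sequence


def clip(grad: Sequence[float], max_norm: float) -> List[float]:
    # Scale the per-example gradient down so its L2 norm is at most max_norm.
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def dp_mean_gradient(
    per_example_grads: Sequence[Sequence[float]],
    max_norm: float,
    noise_multiplier: float,
    rng: random.Random,
) -> List[float]:
    """Clip each example's gradient, sum, add Gaussian noise, then average."""
    clipped = [clip(g, max_norm) for g in per_example_grads]
    dim = len(clipped[0])
    sigma = noise_multiplier * max_norm  # noise scale is tied to the clipping bound
    noisy_sum = [sum(g[i] for g in clipped) + rng.gauss(0.0, sigma) for i in range(dim)]
    return [s / len(clipped) for s in noisy_sum]
```

Clipping bounds how much any single record can move the update, and the noise hides what remains. Both are needed for the guarantee to hold.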

6. Microsoft Semantic Kernel (Enterprise SDK)

Semantic Kernel is Microsoft’s SDK for embedding AI capabilities directly into existing enterprise applications — a fundamentally different goal than LangGraph or CrewAI. Where those frameworks build agent-first systems from scratch, Semantic Kernel is designed to augment what already exists: connecting AI reasoning to CRM systems, ERP pipelines, Microsoft 365, SharePoint, and Teams workflows through a plugin architecture that maps cleanly onto existing enterprise data models. It provides flexibility, modularity and observability to your systems.

The framework supports both Python and C#, giving it unique reach into enterprise codebases that are heavily .NET-based. Its plugin system lets developers define ‘skills’, that is, discrete AI capabilities that compose into larger workflows, and connect those skills directly to enterprise service APIs without building custom adapter layers. For organizations already committed to the Azure stack, Semantic Kernel reduces time-to-deployment significantly compared to framework-agnostic alternatives. Native A2A protocol support was added in 2026, enabling Semantic Kernel agents to participate in cross-platform multi-agent networks alongside LangGraph, CrewAI, and Google ADK agents.
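The plugin idea can be sketched as a small registry plus a pipeline runner. The code below is not Semantic Kernel's API. The `Kernel` class, the `skill` decorator, and the two example skills are hypothetical, and both skills are stand-ins for a service call and an LLM-backed function.

```python
from typing import Callable, Dict, List


class Kernel:
    """Toy plugin registry; names are illustrative, not Semantic Kernel's API."""

    def __init__(self) -> None:
        self._skills: Dict[str, Callable[[str], str]] = {}

    def skill(self, name: str) -> Callable:
        # Decorator that registers a function as a named skill.
        def register(fn: Callable[[str], str]) -> Callable[[str], str]:
            self._skills[name] = fn
            return fn
        return register

    def run_pipeline(self, names: List[str], text: str) -> str:
        # Compose skills left to right: each skill's output feeds the next.
        for name in names:
            text = self._skills[name](text)
        return text


kernel = Kernel()


@kernel.skill("crm.lookup")
def lookup_customer(customer_id: str) -> str:
    # Stand-in for a call to an enterprise service API.
    return f"Customer {customer_id}: 3 open tickets"


@kernel.skill("text.summarize")
def summarize(text: str) -> str:
    # Stand-in for an LLM-backed function.
    return text.split(":")[0] + " needs follow-up"
```

Calling `kernel.run_pipeline(["crm.lookup", "text.summarize"], "42")` chains the two skills. The existing application only needs to know skill names, not how each one is implemented.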

Key features: Plugin architecture, Microsoft 365 native, C# + Python, A2A protocol, Azure integration, telemetry support

Best for: Enterprises embedding AI into Microsoft-stack applications. Not the right choice for greenfield agent systems — it shines brightest when augmenting infrastructure that already exists.

Technical Aspects:

Plugin system: Skills map AI functions to enterprise APIs. Compose skills into pipelines that integrate with SharePoint, Teams, and Dynamics 365 natively.

Best fit test: If your team’s goal is “add AI to our existing Microsoft app,” Semantic Kernel is the natural fit. It lets you integrate the latest AI models into your C#, Python, or Java codebase.

7. Google JAX (Research & Training AI models)

JAX occupies a distinct tier from every other framework in this article. It is not an agent orchestration tool; it is a high-performance numerical computing library for the training and fine-tuning layer of AI, where the actual model weights are produced. Developed by Google with contributions from NVIDIA and the open-source community, JAX brings together NumPy-style syntax, automatic differentiation (via autograd), and OpenXLA’s XLA compiler for just-in-time compilation across CPU, GPU, and TPU. Its composable function transformations, including vmap, jit, grad, and shard_map, can be nested and composed freely, which is what makes it uniquely suited for advanced research and large-scale distributed training.
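What "composable function transformations" means can be shown with toy stand-ins written in plain Python. The `grad` and `vmap` below are not JAX's implementations. They are simplified illustrations (forward-mode dual numbers supporting only + and *, and a list comprehension) that borrow the names of `jax.grad` and `jax.vmap` to show how one transformation wraps another. This toy `grad` does not support nesting with itself, which the real one does.

```python
from dataclasses import dataclass
from typing import Callable, List, Union

Number = Union[float, "Dual"]


@dataclass(frozen=True)
class Dual:
    """Value plus derivative, enough for forward-mode differentiation of + and *."""
    val: float
    dot: float = 0.0

    def __add__(self, other: Number) -> "Dual":
        o = other if isinstance(other, Dual) else Dual(float(other))
        return Dual(self.val + o.val, self.dot + o.dot)

    def __mul__(self, other: Number) -> "Dual":
        o = other if isinstance(other, Dual) else Dual(float(other))
        # Product rule: (uv)' = u'v + uv'
        return Dual(self.val * o.val, self.dot * o.val + self.val * o.dot)

    __radd__ = __add__
    __rmul__ = __mul__


def grad(f: Callable) -> Callable[[float], float]:
    # Transformation 1: turn f into a function that returns df/dx.
    return lambda x: f(Dual(float(x), 1.0)).dot


def vmap(f: Callable) -> Callable[[List[float]], List[float]]:
    # Transformation 2: lift a scalar function to operate on a batch.
    return lambda xs: [f(x) for x in xs]


def cubic(x):
    return x * x * x + 2 * x
```

Here `vmap(grad(cubic))` is itself an ordinary function: it takes a batch of inputs and returns the derivative 3x² + 2 at each one. Transformations take functions and return functions, so they stack.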

In 2026, JAX is the framework of choice for building and training frontier foundation models, and not just at Google. Confirmed users of JAX for foundation model training include Anthropic, xAI, and Apple alongside Google DeepMind. The JAX AI Stack is built on a philosophy of modular, loosely coupled components and now provides a mature production path through four integrated layers: Flax (neural network authoring), Optax (composable optimizers), Orbax (distributed checkpointing), and MaxText/MaxDiffusion (LLM and diffusion model training). JAX is considered one of the fastest-growing ML frameworks of 2026, with rising adoption in scientific computing, physics-informed ML, and quantum chemistry in addition to deep learning.

Key Features: XLA JIT compilation, TPU-native, vmap / shard_map, Flax + Optax + Orbax, MaxText, Tunix (post-training), Library com

Best for: Teams training or fine-tuning foundation models at scale, especially on Google Cloud TPUs. The leading framework for research in scientific ML and any domain requiring higher-order automatic differentiation. It is not an agent orchestration tool on its own; pair it with LangGraph or CrewAI for the inference layer.

Technical Aspects:

JAX over PyTorch for TPUs: JAX runs natively on TPUs, so training there is seamless. PyTorch-XLA exists but adds significant friction. JAX is the recommended path for anyone training on Google Cloud TPUs.

Functional paradigm: JAX requires pure functional programs. No mutable state — all transformations must be stateless. This is a mental shift from PyTorch but enables correctness guarantees at scale.

Post-training (Tunix): Tunix is Google’s JAX-native post-training library supporting SFT with LoRA/Q-LoRA, GRPO, GSPO, DPO, and PPO — the full alignment toolkit in one package.

Scientific computing: Taylor-mode automatic differentiation enables high-order PDE solving, something PyTorch and TensorFlow do not offer natively. Growing adoption in quantum chemistry and physics-informed ML.

JAX ecosystem at a glance: Flax (model architecture) → Optax (optimizer composition) → Orbax (distributed checkpointing with async saving) → MaxText (LLM training) → Tunix (alignment) → vLLM-TPU (inference serving). Each is a standalone library — swap components without rewriting the stack.
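The functional paradigm noted in the list above is easiest to see in a training step. The sketch below is plain Python rather than JAX, with a hypothetical one-variable linear model and hand-derived gradients (JAX would derive them with grad). The point is the shape of `sgd_step`: it reads parameters and returns new ones instead of mutating anything in place.

```python
from typing import Dict, Tuple

Params = Dict[str, float]


def loss(params: Params, x: float, y: float) -> float:
    # Squared error of a one-variable linear model.
    pred = params["w"] * x + params["b"]
    return (pred - y) ** 2


def loss_grads(params: Params, x: float, y: float) -> Params:
    # Hand-derived gradients of the loss above with respect to w and b.
    err = params["w"] * x + params["b"] - y
    return {"w": 2 * err * x, "b": 2 * err}


def sgd_step(params: Params, batch: Tuple[float, float], lr: float) -> Params:
    """Pure update: reads params, returns NEW params, mutates nothing."""
    grads = loss_grads(params, *batch)
    return {name: value - lr * grads[name] for name, value in params.items()}
```

Because the old parameters survive each step untouched, the same function can be compiled, replayed, or run on many devices without hidden state getting in the way. That is the property the functional requirement buys.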

8. Gemini Enterprise Agent Platform (Full-Stack Cloud)

The biggest AI enterprise announcement of April 2026 happened at Google Cloud Next in Las Vegas on April 23rd. Google rebranded and expanded Vertex AI, a unified AI development platform launched in 2021, into the Gemini Enterprise Agent Platform, a full-stack AI system designed around the premise that enterprises need a single destination for building, scaling, governing, and optimizing autonomous agent networks. Google’s VP of Product, Michael Gerstenhaber, framed it simply: “To move toward a truly autonomous enterprise, where agents act with the same independence and reliability as a member of your team, you need a foundation that can sustain that level of trust.”

The platform bundles four major capabilities. Agent Studio is a low-code visual interface for business users to design agents via drag-and-drop logic. The Agent Development Kit (ADK) — now at stable v1.0 in Python, Go, Java, and TypeScript — is the code-first path for engineers, featuring a new graph-based sub-agent framework and native A2A protocol support. The Model Garden provides access to 200+ foundation models including Google’s Gemini 3 family, Anthropic’s Claude (Opus, Sonnet, and Haiku as first-class citizens), Meta’s Llama, and Google’s open Gemma models. Agent Engine is the managed, serverless runtime that deploys agents at global scale. A persistent Memory Bank lets agents recall preferences, history, and context across sessions.

The platform’s A2A protocol integration is its most strategically significant feature: agents built on Vertex AI can now natively hand off tasks to agents running on Salesforce Agentforce, ServiceNow, LangGraph, CrewAI, AutoGen, LlamaIndex, and Semantic Kernel, all through a standardized protocol and without any of the systems needing to understand each other’s internal architecture. Google’s Apigee API management platform also functions as an MCP bridge, translating any existing API into a discoverable agent tool with existing security controls.
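The idea of handing off work without knowing the other system's internals can be sketched as follows. This is a conceptual toy, not the A2A wire format: the `AgentCard` fields, `make_task`, and `hand_off` are hypothetical, and a plain function stands in for a network endpoint.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentCard:
    """What a remote agent advertises about itself (hypothetical fields)."""
    name: str
    skills: List[str]
    handle: Callable[[str], str] = field(repr=False)  # stands in for a network endpoint


def make_task(skill: str, payload: dict) -> str:
    # The only thing crossing the boundary is a serialized, framework-neutral message.
    return json.dumps({"skill": skill, "payload": payload})


def hand_off(task: str, directory: Dict[str, AgentCard]) -> str:
    """Route a task to the first advertised agent that lists the requested skill."""
    skill = json.loads(task)["skill"]
    for card in directory.values():
        if skill in card.skills:
            return card.handle(task)
    raise LookupError(f"no agent advertises skill {skill!r}")
```

The sender depends only on the advertised skill name and the message shape. Whether the receiver is built on a graph, a crew, or a conversation loop never enters the picture.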

Key Features: Agent Studio (low-code), ADK v1.0, A2A protocol, 200+ models, Memory Bank, BigQuery native, MCP via Apigee

Best for: Large enterprises committed to Google Cloud that want a managed, governed, full-lifecycle agent platform. Not the right choice for teams that need cost predictability, are multi-cloud, or simply need an internal tool with AI features bolted on. The ADK alone is worth exploring as an open-source framework regardless of cloud commitment.

Technical Aspects:

Real customer results: Danfoss automated 80% of email order processing, cutting response times from 42 hours to near real-time. Suzano reduced SQL query time by 95% for 50,000 employees using a natural-language-to-SQL agent.

Model access: Gemini 3 Pro and Flash are the default reasoning models. Claude (Anthropic), Llama (Meta), and Gemma (Google open) are first-class alternatives in the Model Garden.

Governance layer: Model Armor inspects prompts for injection and data exfiltration. IAM controls govern which agents can access which tools and data sources.

Cost reality check: Pricing is complex and Google-Cloud-committed. Teams not already in GCP will pay a significant integration premium. The math works best when data lives in BigQuery and Workspace.

Cloud commitment: ADK is open source and can deploy to any Kubernetes environment, but you lose Memory Bank, Agent Engine, and governance layers outside Google Cloud. Evaluate total cost carefully if you’re multi-cloud.
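The governance idea in the list above, where access controls decide which agents may use which tools, reduces to a deny-by-default check before every tool call. The sketch below is a conceptual illustration in plain Python, not Google Cloud IAM or Model Armor. The policy table, agent names, and tools are all hypothetical.

```python
from typing import Callable, Dict, Set

# Hypothetical policy: which agent identities may call which tools.
POLICY: Dict[str, Set[str]] = {
    "order-agent": {"read_orders", "send_email"},
    "sql-agent": {"run_query"},
}

# Hypothetical tools; each is a stand-in for a real integration.
TOOLS: Dict[str, Callable[[str], str]] = {
    "read_orders": lambda arg: f"orders for {arg}",
    "send_email": lambda arg: f"email sent to {arg}",
    "run_query": lambda arg: f"rows for {arg}",
}


def call_tool(agent: str, tool: str, arg: str) -> str:
    """Deny by default: the call goes through only if the policy names the tool."""
    if tool not in POLICY.get(agent, set()):
        raise PermissionError(f"{agent} may not call {tool}")
    return TOOLS[tool](arg)
```

Keeping the check in one place, outside the agents themselves, is what makes the policy auditable. An agent cannot grant itself a tool by changing its own prompt.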

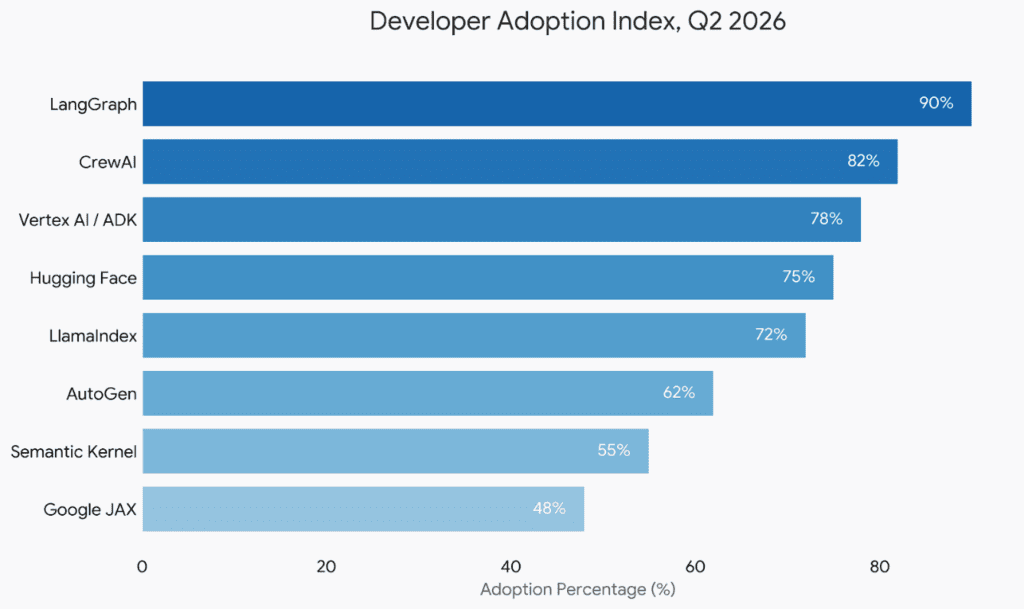

Adoption landscape, Q2 2026

Developer adoption measured across GitHub activity, enterprise deployment surveys, and community growth as of April 2026.

How to Choose the Right Framework

Building a new agent product? – Start with CrewAI to validate the use case. Migrate to LangGraph when you need production-grade state control and failure resilience.

Data-heavy retrieval? – Lead with LlamaIndex for the retrieval layer. Layer LangGraph or CrewAI above it for orchestration.

Training foundation models? – Google JAX + the JAX AI Stack (Flax, Optax, Orbax, MaxText, Tunix) is the highest-performance path, especially on TPUs.

Privacy-critical deployment? – Hugging Face is the option on this list built around fully on-premises inference. Non-negotiable for healthcare, finance, and government.

Microsoft-stack enterprise? – Semantic Kernel integrates deepest into Azure, Microsoft 365, and Dynamics. Reduces time-to-deployment significantly for existing stacks.

Google Cloud committed? – Gemini Enterprise Agent Platform (Vertex AI) offers the most complete managed agent lifecycle — from prototyping through governance at global scale.

Research / experimental AI? – AutoGen for multi-agent conversation experiments. JAX for any scientific computing or novel architecture research.

Cross-vendor agent networks? – A2A protocol support in 2026 is now built into LangGraph, CrewAI, LlamaIndex, AutoGen, Semantic Kernel, and Google ADK — vendor interoperability is finally real.

“By year-end 2026, expect clearer specialization: LangGraph owns the production/enterprise tier; Hugging Face Smolagents dominates the research community; CrewAI and AutoGen compete for the accessible middle ground; Vertex AI owns the managed full-stack layer.” — Pooya Golchian, AI Framework Benchmarks Report, Q1 2026

The most sophisticated teams in 2026 aren’t choosing one framework; they’re composing AI stacks. JAX trains the model. LlamaIndex indexes the data. LangGraph orchestrates the agents. CrewAI handles rapid prototyping. Vertex AI provides the governance and deployment layer. And the A2A protocol binds them all together across vendors. The framework layer is where real engineering decisions live now. Start with an AI architecture in mind that suits your business needs and capabilities.